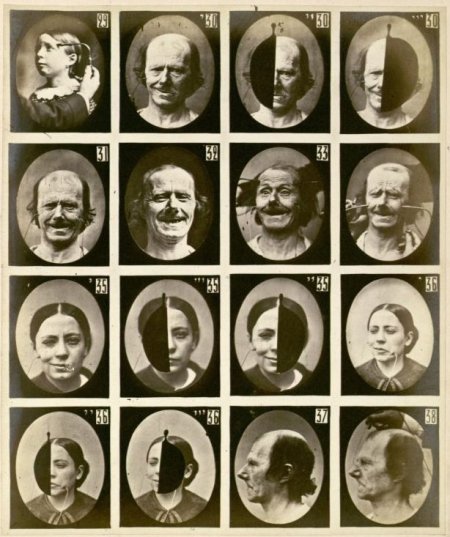

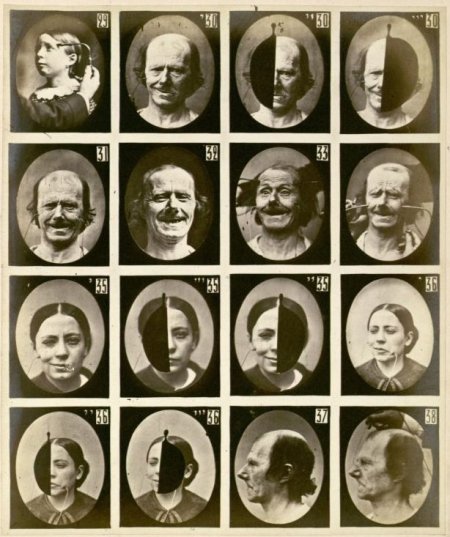

Mécanisme de la Physionomie Humaine by Guillaume Duchenne from wikipedia


The face of a human (let's include the ears) is the part of the human body that is usually addressed first as an interface to the mind and body behind it, and it most often remains the main interface used by other humans (and animals). After a first contact people may shake hands etc., but the face is still usually the starting point when facing each other, and together with subtle gestures it can give rise to very fast judgements about a person's personality.

So it is no wonder that a portrait of a person almost always includes the face. Faces usually move, and movement is very important in the perception of a face. A portrait painting or portrait photograph, however, has no movement, and still portraits describe the person behind the face, at least to a certain extent. There is also a well-known rumour (I couldn't find a study on it) that a drawing reflects its painter to a certain extent; e.g. fat artists apparently tend to draw people more solidly than thin artists, etc.

So it is no wonder that people try to find laws, e.g. for when a (still) face looks attractive to others and when not. Facial expressions (see the images above) play a significant role (see also this old randform post), but cultural factors etc. are also important. Still, if we assume that all these factors have been eliminated as well as possible (e.g. by comparing bold black-and-white faces of the same age group with emotionless expressions), is there still a link between the appearance of a face and the interpretation of the character of the person behind it? How stable is this interpretation, e.g. when the face has been distorted by violence or an accident? How far does the physical distortion parallel a psychological one?

All these studies are of course especially interesting when it comes to constructing artificial faces, like in virtual spaces or for humanoid robots (e.g. here) (see also this old randform post).

Similar questions were also explored in the nice Future Face exhibition at the Science Museum in London, organized by the Wellcome Trust.

An analytical approach is to start with proportions. Here there are some prominent old works, such as Leonardo’s or Duerer’s studies, leading, last but not least, to studies in artificial intelligence that, for example, link “beautiful” proportions to the low complexity of the corresponding encoded information.
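
To make the complexity idea a bit more tangible, here is a minimal sketch. Kolmogorov complexity itself is uncomputable, so the sketch swaps in a common practical proxy, the length of the zlib-compressed data, and compares a list of proportions that all follow one simple rule with a list of random proportions. The proportion values and the helper names (`compressed_length`, `proportions_to_bytes`) are hypothetical illustrations and are not taken from any of the studies mentioned.

```python
import random
import zlib


def compressed_length(data: bytes) -> int:
    """Length of the zlib-compressed data: a crude, computable upper-bound
    proxy for Kolmogorov complexity (which itself is uncomputable)."""
    return len(zlib.compress(data, 9))


def proportions_to_bytes(ratios):
    """Encode a list of (hypothetical) facial proportion ratios as ASCII
    text, rounded to three decimals, e.g. b"0.333,0.333"."""
    return ",".join(f"{r:.3f}" for r in ratios).encode("ascii")


# Hypothetical example: 64 proportion ratios that all follow one simple rule
# (every ratio equals 1/3) ...
regular = proportions_to_bytes([1 / 3] * 64)

# ... versus 64 ratios drawn at random from a similar range.
rng = random.Random(0)
irregular = proportions_to_bytes([rng.uniform(0.2, 0.5) for _ in range(64)])

# Both encodings have the same raw length; only their regularity differs.
print(len(regular), len(irregular))
print(compressed_length(regular), compressed_length(irregular))
```

The rule-based list compresses to far fewer bytes than the random list of the same raw length, which is the sense in which “simple” proportions carry low-complexity information.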

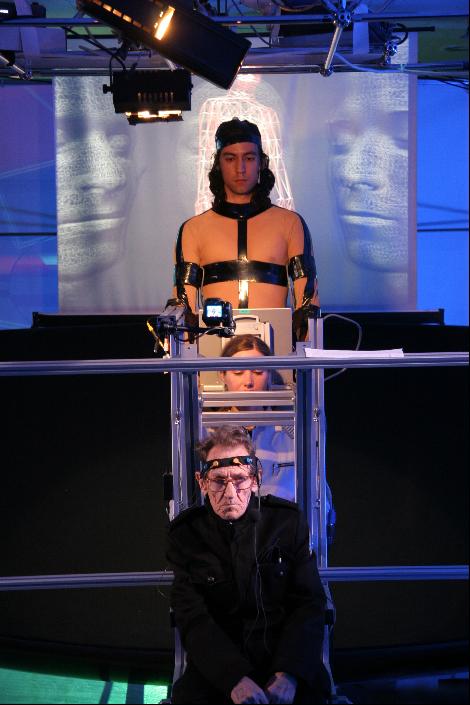

These questions are somewhat related to the question of how interfaces relate to processes of computing, even if one doesn't think only of robots. As we saw above, they also concern Human-Computer Interaction and, finally, Human-Computer-Human Interaction, which we addressed e.g. in our work seidesein.

update June 14th, 2017: according to the nytimes (original article), researchers from Caltech have apparently found out how macaque monkeys encode images of faces in their brains. The article describes how the firing patterns of 200 brain cells could be translated into deviations from a “standard face” along certain axes, which span 50 dimensions. From the nytimes:

“The tuning of each face cell is to a combination of facial dimensions, a holistic system that explains why when someone shaves off his mustache, his friends may not notice for a while. Some 50 such dimensions are required to identify a face, the Caltech team reports.

These dimensions create a mental “face space” in which an infinite number of faces can be recognized. There is probably an average face, or something like it, at the origin, and the brain measures the deviation from this base.

A newly encountered face might lie five units away from the average face in one dimension, seven units in another, and so forth. Each face cell reads the combined vector of about six of these dimensions. The signals from 200 face cells altogether serve to uniquely identify a face.”
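
The “face space” described in the quote can be sketched as a linear model. This is a minimal sketch with hypothetical random tuning weights: each of 200 simulated cells responds linearly to a random combination of 6 of the 50 dimensions, a face is a vector of deviations from the average face at the origin, and the face is read back out from all 200 firing rates by least squares. The baseline rate, the weights and the helper names `encode` and `decode` are invented for illustration; this is not the Caltech team's code or data.

```python
import numpy as np

N_DIMS = 50        # dimensions of the face space (from the quoted article)
N_CELLS = 200      # number of face cells (from the quoted article)
DIMS_PER_CELL = 6  # each cell reads about six dimensions (from the article)
BASELINE = 10.0    # hypothetical firing rate for the average face

rng = np.random.default_rng(0)

# Hypothetical tuning: each cell gets random weights on 6 randomly chosen
# dimensions and zero weight on all other dimensions.
weights = np.zeros((N_CELLS, N_DIMS))
for cell in range(N_CELLS):
    dims = rng.choice(N_DIMS, size=DIMS_PER_CELL, replace=False)
    weights[cell, dims] = rng.normal(size=DIMS_PER_CELL)


def encode(face):
    """Firing rates of all cells for a face given as a deviation vector from
    the average face. Purely linear; real firing rates cannot go negative,
    which this toy model ignores."""
    return BASELINE + weights @ face


def decode(rates):
    """Linear read-out: least-squares estimate of the face vector."""
    face, *_ = np.linalg.lstsq(weights, rates - BASELINE, rcond=None)
    return face


average_face = np.zeros(N_DIMS)     # the origin of the face space
new_face = rng.normal(size=N_DIMS)  # hypothetical newly encountered face

print(encode(average_face)[:3])     # the average face leaves every cell at baseline
print(np.allclose(decode(encode(new_face)), new_face))
```

No single cell identifies a face, since each one only sees a few dimensions, but the population does, as long as the cells together cover all 50 dimensions (i.e. the weight matrix has full column rank).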

If I haven’t overlooked something, the article doesn’t say how, or whether, that “standard face” is connected to “simple face dimensions”, i.e. “easy to compute facial features” as mentioned above.

From very briefly skimming the original article, I understand that the researchers pinpointed 400 facial features, 200 for shape and 200 for appearance, looked at the directions in which these features move across a set of faces, and extracted those “move directions” via a PCA. They then noticed, first, that individual cells responded mostly to only 6 dimensions and, second, that the firing rate varied in a way that apparently allowed specific faces to be encoded linearly in this 50-dimensional space. In those few minutes of reading I couldn’t find out whether the authors give any indication of how, e.g., the “shape points” (figure 1a in the image panel) move when moving along one of the 25 shape dimensions, i.e. in particular whether some kind of Kolmogorov complexity features could be extracted (as seems to be done here) or not.
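
The pipeline as I understand it from skimming (shape and appearance features, a PCA, 25 + 25 dimensions) can be sketched with NumPy's SVD. This is a sketch under stated assumptions: the training data is random stand-in data, the number of faces is hypothetical, and the hypothetical helper `move_along_shape_axis` only illustrates the kind of question raised above (how the shape features change when moving along one shape dimension). It is not the authors' method.

```python
import numpy as np

N_FACES = 300       # hypothetical number of training faces
N_SHAPE = 200       # shape features (e.g. landmark coordinates), as read above
N_APPEARANCE = 200  # appearance features, as read above
N_COMPONENTS = 25   # dimensions kept per block: 25 + 25 = 50

rng = np.random.default_rng(1)

# Hypothetical stand-in data; real data would come from annotated face images.
shape = rng.normal(size=(N_FACES, N_SHAPE))
appearance = rng.normal(size=(N_FACES, N_APPEARANCE))


def pca(data, k):
    """Return the mean and the top-k principal axes (as rows) of `data`."""
    mean = data.mean(axis=0)
    # Rows of vt are orthonormal principal directions, sorted by variance.
    _, _, vt = np.linalg.svd(data - mean, full_matrices=False)
    return mean, vt[:k]


shape_mean, shape_axes = pca(shape, N_COMPONENTS)
app_mean, app_axes = pca(appearance, N_COMPONENTS)


def to_face_space(shape_vec, app_vec):
    """Project one face onto the 25 shape + 25 appearance axes (50 dims)."""
    return np.concatenate([shape_axes @ (shape_vec - shape_mean),
                           app_axes @ (app_vec - app_mean)])


def move_along_shape_axis(axis_index, t):
    """Shape features t units away from the mean shape along one shape
    dimension, i.e. where the shape points would move to."""
    return shape_mean + t * shape_axes[axis_index]


face_vector = to_face_space(shape[0], appearance[0])
print(face_vector.shape)
```

With real landmark data, plotting `move_along_shape_axis(i, t)` for a few values of `t` would show how the shape points move along shape dimension `i`.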

It is also unclear to me what these new findings mean for the “toilet paper wasting generation” in China.

By the way, in this context I would like to link to our artwork CloneGiz.
